Maaratech Field Trip to Nelson

Part of the Maaratech research team travelled to Hope, Nelson in late November 2021 to collect data from an apple orchard. The team, consisting of Oliver Batchelor, Josh McCulloch and Max Andrew, ran the robot Conan up and down the rows of espaliered apple trees at Hoddys Orchards.

Both the camera array and the robotic arm were used. Cameras mounted on the end of the arm scan the leaves, branches and apples in a circular motion, starting underneath the branch and curving around it while the data is recorded onto the computer.

With espaliered trees, the apples, branches and leaves lie essentially in one plane, so Conan can scan them easily. The hope is that machines like Conan will eventually be developed into commercial robots that thin fruit, prune and carry out other tasks in the orchard.

Professor Richard Green wrote a report on this field trip:

Nelson field trip to Hoddys Orchard

Transported Conan, UR5 robot arm and LAL leaf blower.

Set up a new laptop and did some maintenance on the calibration/stereo inference code used to run Auckland’s robotic arm scanning code, and successfully collected data with stereo cameras on the UR5.

Stereo training framework:

– Data loaders for a wide range of public datasets (and our own Maaratech datasets)

– Configurable augmentations (some slightly novel ones, like pasting one dataset on top of another and trapezoid warping)

– Separate benchmarking methods for feature extraction, cost volume creation and 3D filtering

(The reason for the focus on benchmarking is to find a happy medium between runtime performance and quality: aside from HSMNet, which we currently use, none of the existing methods is remotely real-time on higher-resolution images.) To improve robustness and accuracy, we have captured some multi-view datasets (botanic gardens) and are working on methods for capturing data in the way that makes reconstruction easiest.
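As a rough illustration of benchmarking the feature extraction, cost volume creation and 3D filtering stages separately, the sketch below times each stage of a toy pipeline on its own. The stage functions are hypothetical placeholders written for this example, not the Maaratech framework or HSMNet, and `benchmark_stages` is an assumed helper name.

```python
import time
from statistics import mean


def benchmark_stages(stages, sample, repeats=5):
    """Time each stage separately, feeding each stage's output into the next.

    `stages` is an ordered list of (name, function) pairs. Returns a dict
    mapping stage name to mean wall-clock seconds per call.
    """
    timings = {}
    data = sample
    for name, fn in stages:
        runs = []
        for _ in range(repeats):
            start = time.perf_counter()
            out = fn(data)
            runs.append(time.perf_counter() - start)
        timings[name] = mean(runs)
        data = out  # the next stage consumes this stage's output
    return timings


# Hypothetical toy stand-ins for the three stages (assumptions, not real models).
def extract_features(pair):
    left, right = pair
    return [x * 2 for x in left], [x * 2 for x in right]


def build_cost_volume(features):
    left, right = features
    # One absolute-difference "cost" per (left pixel, right candidate).
    return [[abs(l - r) for r in right] for l in left]


def filter_volume(volume):
    # Placeholder for filtering: pick the lowest-cost candidate per pixel.
    return [row.index(min(row)) for row in volume]


stages = [
    ("feature extraction", extract_features),
    ("cost volume creation", build_cost_volume),
    ("3D filtering", filter_volume),
]
timings = benchmark_stages(stages, (list(range(64)), list(range(64))))
for name, seconds in timings.items():
    print(f"{name}: {seconds * 1e3:.3f} ms")
```

Timing stages individually shows which one dominates at a given image resolution. For stages that run on a GPU, the device would also need to be synchronised before each clock reading, which this CPU-only sketch does not cover.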

Better quality reconstructions on a variety of scenes: we are looking at using the sparse point cloud for better initialisation and for establishing priors for ray sampling. We may also use a NeRF variant focused on surface reconstruction to avoid noisy surfaces.

Skeleton extraction: with Harry Dobbs, we are focused on estimating local radius and branch direction in order to disambiguate overlapping branches during skeletonization, i.e. skeletonization becomes a very simple last step once this information is available.
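To make "local radius and branch direction" concrete, here is a minimal geometric sketch that estimates both from the 3D points of one short branch segment using principal component analysis. This is an assumption made for illustration only; the report does not say how the team estimates these quantities, and `local_direction_and_radius` is a hypothetical name.

```python
import numpy as np


def local_direction_and_radius(points):
    """Estimate the axis direction and radius of a roughly cylindrical patch.

    points: (N, 3) array of surface points from one short branch segment.
    Returns (unit direction vector, radius estimate).
    """
    points = np.asarray(points, dtype=float)
    centre = points.mean(axis=0)
    centred = points - centre
    # Principal axis of the patch: eigenvector of the covariance matrix with
    # the largest eigenvalue (eigh returns eigenvalues in ascending order).
    _, eigvecs = np.linalg.eigh(np.cov(centred.T))
    direction = eigvecs[:, -1]
    # Distance of each point from the axis through the centroid.
    along = centred @ direction
    radial = centred - np.outer(along, direction)
    radius = np.linalg.norm(radial, axis=1).mean()
    return direction, radius


# Synthetic branch segment: a cylinder of radius 0.02 m along the z axis.
rng = np.random.default_rng(0)
theta = rng.uniform(0.0, 2.0 * np.pi, 500)
z = rng.uniform(-0.2, 0.2, 500)
cylinder = np.column_stack([0.02 * np.cos(theta), 0.02 * np.sin(theta), z])

direction, radius = local_direction_and_radius(cylinder)
print(direction, radius)
```

The sketch assumes the segment is longer than it is wide and that points cover the whole circumference. With a one-sided camera view the centroid shifts towards the visible surface, so the radius estimate would be biased.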

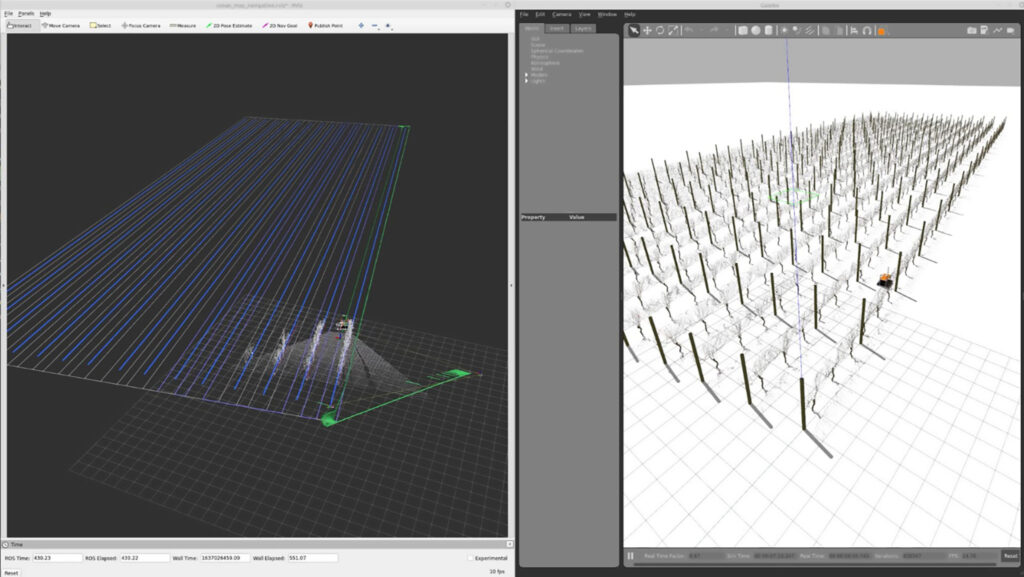

Maps of vineyards and orchards

We have developed a tool for quickly creating maps of vineyards and orchards. These maps can then be exported in our standard map format for use in the path planner on Conan and in Gazebo simulations.

As an example, the robot has been instructed to scan rows 1, 2, 5 and 6, with the path planner updated accordingly.

Another map contains two blocks (in different colours), where Conan has been instructed to scan one row from each block. After scanning the first row, Conan navigates to the second block.
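The idea of a map of blocks and rows feeding a path planner can be sketched with a small data structure. The `Row` and `Block` classes, the coordinates and the `plan_scan` function below are all hypothetical; they are not the Maaratech map format or Conan's planner, only a minimal illustration of ordering a requested set of rows.

```python
from dataclasses import dataclass, field


@dataclass
class Row:
    number: int
    start: tuple  # (x, y) of one end of the row, in metres
    end: tuple    # (x, y) of the other end


@dataclass
class Block:
    name: str
    rows: list = field(default_factory=list)


def plan_scan(blocks, requests):
    """Return an ordered list of (block name, row number, entry, exit) legs.

    requests: list of (block name, row number) pairs in the order to scan.
    Each row is entered from whichever end is nearer to where the previous
    row was exited.
    """
    lookup = {(b.name, r.number): r for b in blocks for r in b.rows}
    legs, position = [], None
    for block_name, row_number in requests:
        row = lookup[(block_name, row_number)]
        ends = [row.start, row.end]
        if position is not None:
            # Sort the two row ends by squared distance from the robot.
            ends.sort(key=lambda p: (p[0] - position[0]) ** 2
                      + (p[1] - position[1]) ** 2)
        entry, exit_ = ends
        legs.append((block_name, row_number, entry, exit_))
        position = exit_
    return legs


# Hypothetical block: six parallel 50 m rows spaced 3 m apart.
block_a = Block("A", [Row(n, (3.0 * n, 0.0), (3.0 * n, 50.0)) for n in range(1, 7)])
legs = plan_scan([block_a], [("A", n) for n in (1, 2, 5, 6)])
for leg in legs:
    print(leg)
```

Because requests are keyed by block name as well as row number, the same call can list rows from two different blocks, and the robot's position simply carries over from the last row of one block to the first row of the next.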

Check out photos of our UC Computer Vision Research team and Conan the robot in action.